The End of the Road for “The More Data The Better” Assumption?

Many in the world of statistical MT have long held that success with SMT depends almost entirely on the volume of data you have. The experts at Google, ISI and TAUS have all been saying this for years. There has been some discussion questioning this “only data matters” assumption, but many in the SMT world continue to believe the statement below because, to some extent, it has actually been true. Many of us have witnessed the steady quality improvements at Google Translate in our casual use to read an occasional web page (especially after they switched to SMT), but for the most part these MT engines rarely rise above gisting quality.

"The more data we feed into the system, the better it gets..." Franz Och, Head of SMT at Google

However, in an interesting review of the challenges of Google’s MT efforts in the Guardian, we begin to see some recognition that MT is a REALLY TOUGH problem to solve with machines, data and science alone. The article also quotes Douglas Hofstadter, who questions whether MT will ever work as a human replacement, since language is the most human of human activities. He is very skeptical and suggests that this quest to create accurate MT (as a total replacement for human translators) is basically impossible. While I too have serious doubts about whether machines will ever learn meaning and nuance at a level that compares with competent humans, I think we should focus on the real discovery here, i.e. that more data is not always better and/or that computers and data alone are not enough. MT is still a valuable tool, and if used correctly it can provide great value in many different situations. The Google admission, according to this article, is as follows:

“Each doubling of the amount of translated data input led to about a 0.5% improvement in the quality of the output,” and "We are now at this limit where there isn't that much more data in the world that we can use." Andreas Zollmann of Google Translate.
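To see what this rate implies, here is a rough back-of-the-envelope sketch. It assumes the quoted figure means a fixed gain of 0.5 percentage points per doubling (a log-linear relationship) and that the rate stays constant, neither of which the article confirms. The function names are hypothetical and only for illustration.

```python
import math

# Assumption: "about 0.5%" is read as 0.5 percentage points per doubling.
GAIN_PER_DOUBLING = 0.5


def quality_gain(old_words: float, new_words: float) -> float:
    """Estimated gain (percentage points) when the corpus grows from old_words to new_words."""
    doublings = math.log2(new_words / old_words)
    return GAIN_PER_DOUBLING * doublings


def growth_factor_for(gain: float) -> float:
    """How many times larger the corpus must become to reach the given gain."""
    return 2 ** (gain / GAIN_PER_DOUBLING)


if __name__ == "__main__":
    print(quality_gain(1e9, 2e9))    # one doubling of a billion-word corpus
    print(growth_factor_for(5.0))    # growth needed for a 5-point gain
```

Under these assumptions, a 5-point gain needs ten doublings, i.e. roughly a thousand times more data, which is why running out of new data matters so much.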

But Google is hardly throwing in the towel on MT; they will try “to add on different approaches and (explore) rules-based models."

Interestingly, the “more data is better” issue is also being challenged in the search arena. In their zeal to index the world’s information, Google attempts to crawl and index as many sites as possible (because more data is better, right?). However, spammers are creating SEO-focused “crap content” that increasingly shows up at the top of Google searches. (I experienced this first hand when I searched for widgets to enhance this blog. I gave up after going through page after page of SEO-focused crap.) This article describes the impact of the low-quality content created by companies like Demand Media, and the effect is summarized succinctly in the quote below.

Searching Google is now like asking a question in a crowded flea market of hungry, desperate, sleazy salesmen who all claim to have the answer to every question you ask. Marco Arment

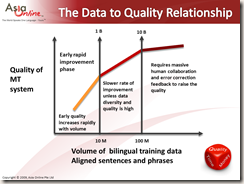

But getting back to the issue of data volume and MT engine improvements, have we reached the end of the road? I think this is possibly true for some languages, i.e. data-rich languages like French, Spanish and Portuguese, where it is quite possible that tens of billions of words underlie the MT systems already. It is not necessarily true for sparse-data languages, or those less present on the net (pretty much anything other than FIGS and maybe CJK), and we will hopefully see these other languages continue to improve as more data becomes available. In the graphic below we can see a very rough and generalized relationship between data volume and engine quality. I have a very rough estimate of the Google scale on top, and a lower data volume scale at the bottom for customized systems (which are generally focused on a single domain), where less is often more.

Ultan O’Broin provides an important clue (I think anyway) for continued progress: “There's a message about information quality there, surely.” At Asia Online we have always been skeptical of the “the more data the better” view and we have ALWAYS claimed that data quality is more important than volume. One of the problems created by large-scale automated data-scraping is that it is more than possible to pick up large amounts of noise and digital dirt, or just plain crap, through this approach. Early SMT developers all used crawler-based web-scraping techniques to acquire the training data to build their baseline systems. We have all learned by now, I hope, that it is very difficult to identify and remove noise from a large corpus, since noise is largely random and automated cleaning routines can usually only target known patterns. (It is interesting to see that “crap content” also undermines the search algorithms, since machines (i.e. spider programs) don’t make quality judgments on the data they crawl. Thus Google can, and easily does, identify crap content as the most relevant and important content for all the wrong reasons, as Arment points out above.)

Though corporate translation memories (TM) can be of higher quality than web-scraped data sometimes, TM also tends to gather digital debris over time. This noise comes from a) tools vendors who try to create lock-in situations by adding proprietary meta-data to the basic linguistic data, b) the lack of uniformity between human translators and c) poor standards that make consolidation and data sharing highly problematic. In a blog article describing a study of TAUS TM data consolidation, Common Sense Advisory describes this problem quite clearly: “Our recent MT research contended that many organizations will find that their TMs are not up to snuff — these manually created memories often carve into stone the aggregated work of lots of people of random capabilities, passed back and forth among LSPs over the years with little oversight or management.”

So what is the way forward, if we still want to see ongoing improvements?

I cannot really speak to what Google should do (they have lots of people smarter than me thinking about this), but I can share the basic elements of a strategy that I see clearly working to produce continuously improving customized MT systems developed by Asia Online. It is much easier to improve customer-specific systems than a universal baseline.

- Make sure that your foundation data is squeaky clean and of good linguistic quality (which means that linguistically competent humans are involved in assessing and approving all the data that is used in developing these systems).

- Normalize, clean and standardize your data on an ongoing and regular basis.

- Focus 75% of your development effort on data analysis and data preparation.

- Focus on a single domain.

- Understand that dealing with MT is more akin to interaction with an idiot-savant than with a competent and intelligent human translator.

- Involve competent linguists through various stages of the process to ensure that the right quality focused decisions are being made.

- Use linguistically informed development strategies as pure data based strategies are only likely to work to a point.

- For language pairs with very different syntax, morphology and grammar it will probably be necessary to add linguistic rules.

- Use linguists to identify error patterns and develop corrective strategies.

- Understand the content that you are going to translate and understand the quality that you need to deliver.

- Clean and simplify the source content before translation.

- And if quality really matters, always use human validation and review.

All of this could be summarized simply as: make sure that your data is of high quality, and use competent human linguists throughout the development process to improve the quality. This is true today and will be true tomorrow.
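As a rough illustration of the "normalize, clean and standardize" step in the list above, here is a minimal sketch of a filter for sentence-aligned training pairs. It is not Asia Online's pipeline; the function names, the length-ratio threshold and the specific rules are assumptions chosen only for illustration. Note that it can only target known patterns, which is exactly why human linguists still need to review what comes out.

```python
import re
import unicodedata


def normalize(text: str) -> str:
    """Normalize Unicode, strip leftover markup tags and collapse whitespace."""
    text = unicodedata.normalize("NFC", text)
    text = re.sub(r"<[^>]+>", " ", text)      # known pattern: stray HTML/XML tags
    return re.sub(r"\s+", " ", text).strip()


def clean_pairs(pairs, max_ratio=3.0):
    """Filter (source, target) pairs using a few simple, known-pattern rules.

    max_ratio is a hypothetical threshold for the character-length ratio;
    a sensible value depends on the language pair.
    """
    seen = set()
    kept = []
    for src, tgt in pairs:
        src, tgt = normalize(src), normalize(tgt)
        if not src or not tgt:
            continue                           # empty side
        if src == tgt:
            continue                           # likely untranslated copy (may drop names)
        ratio = max(len(src), len(tgt)) / min(len(src), len(tgt))
        if ratio > max_ratio:
            continue                           # likely misaligned segments
        key = (src.lower(), tgt.lower())
        if key in seen:
            continue                           # duplicate pair
        seen.add(key)
        kept.append((src, tgt))
    return kept
```

For example, passing in a list containing a duplicated pair, a pair with markup, an untranslated "OK"/"OK" pair and a badly misaligned pair would keep only the first copy of the duplicate and the markup-stripped pair. Anything noisier than these simple patterns would pass straight through, which is where linguistic review comes in.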

I suspect that effective man-machine collaborations will outperform pure data-driven approaches in future, as we are already seeing with both MT and search, and I would not be so quick to write off Google. I am sure that they can still find many ways to continue to improve. As long as the 6 billion people not working in the professional translation industry care about getting access to multilingual content, people will continue to try to improve MT. And if somebody tells you that machines can generally outperform or replace human translators (in 5 years no less), don’t believe them (but understand there is great value in learning how to use MT technology more effectively anyway). We have quite a ways to go yet till we get there, if ever at all.

I recall a conversation with somebody at DARPA a few years ago, who said that the universal translator in Star Trek was the single most complex piece of technology on the Starship Enterprise, and that mankind was likely to invent everything else on the ship, before they had anything close to the translator that Captain Kirk used.

MT is actually still making great progress, but it is wise to always be skeptical of the hype. As we have seen lately, huge hype does not necessarily lead to success, as Google Buzz and Wave have shown.